
- [Research] Professor Simon S. Woo’s Research Lab Publishes a Paper at AAAI 2022

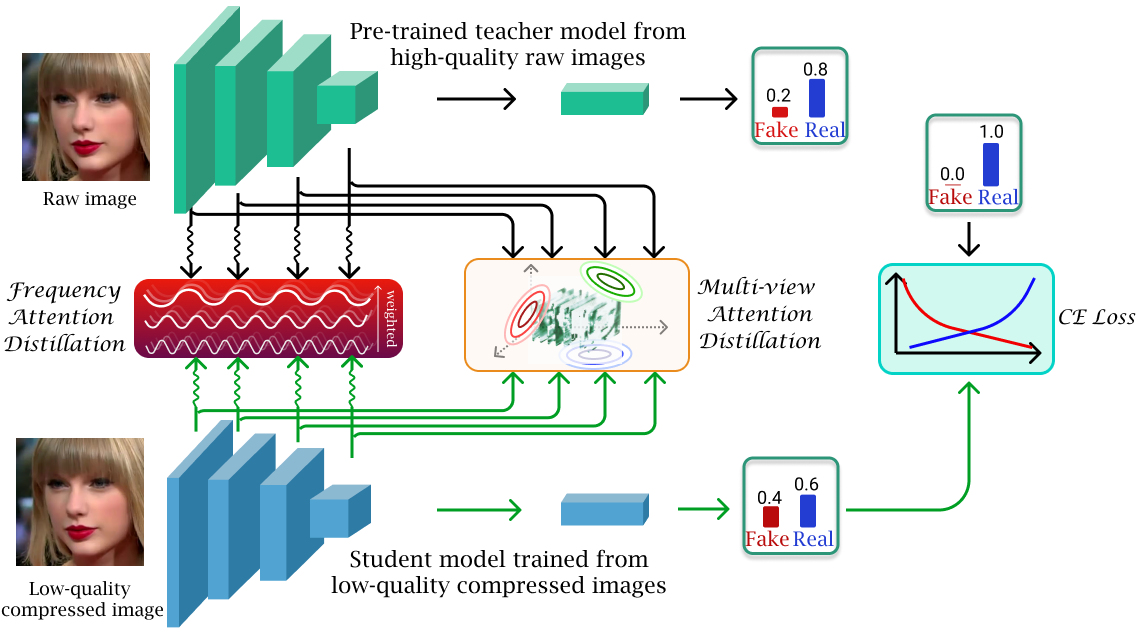

- Research by Professor Simon S. Woo and Binh M. Le, a student in his Data-driven AI Security HCI Lab (DASH Lab), was accepted at a top-tier artificial intelligence conference, the Thirty-Sixth AAAI Conference on Artificial Intelligence (acceptance rate: 15%, BK IF = 4). The work will be presented in February 2022 in Vancouver, Canada. In this work, the authors propose a novel method to detect low-quality compressed deepfake images. It draws on optimal transport theory, frequency-domain learning, and knowledge distillation to transfer representations from a teacher model pre-trained on high-quality images to a student model trained to detect low-quality compressed images. The authors argue that low-quality images pose two main challenges for a detection model: the loss of high-frequency information and the loss of correlation in a compressed image. To address them, they propose Attention-based Deepfake detection Distillations, which explore frequency attention distillation and multi-view attention distillation within a knowledge distillation (KD) framework to detect highly compressed deepfakes. The frequency attention helps the student retrieve, and focus more on, high-frequency components from the teacher. The multi-view attention, inspired by the Sliced Wasserstein distance, pushes the distribution of the student's output tensor toward the teacher's, preserving correlated pixel features between tensor elements from multiple views. In experiments on several benchmark datasets, the authors validate the effectiveness of their method against many previous state-of-the-art detection models.
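The multi-view attention distillation is described as inspired by the Sliced Wasserstein distance. The following is a minimal sketch of that distance only, not the authors' loss, whose exact form the announcement does not give. It compares two equal-size sets of feature vectors by projecting them onto random directions and comparing the sorted 1-D projections. The function name `sliced_wasserstein` and its parameters are hypothetical, chosen for illustration.

```python
import math
import random


def sliced_wasserstein(xs, ys, num_projections=64, seed=0):
    """Monte Carlo estimate of the sliced 1-Wasserstein distance between two
    equal-size point clouds xs and ys (lists of d-dimensional vectors)."""
    if len(xs) != len(ys) or not xs:
        raise ValueError("this sketch assumes two non-empty clouds of equal size")
    rng = random.Random(seed)  # fixed seed so repeated calls use the same directions
    dim = len(xs[0])
    total = 0.0
    for _ in range(num_projections):
        # Random direction on the unit sphere: a normalised Gaussian vector.
        theta = [rng.gauss(0.0, 1.0) for _ in range(dim)]
        norm = math.sqrt(sum(t * t for t in theta))
        theta = [t / norm for t in theta]
        # Project each cloud onto theta, giving two sets of 1-D samples.
        px = sorted(sum(a * b for a, b in zip(x, theta)) for x in xs)
        py = sorted(sum(a * b for a, b in zip(y, theta)) for y in ys)
        # In 1-D, optimal transport between equal-size empirical distributions
        # matches sorted samples, so W1 is the mean absolute sorted difference.
        total += sum(abs(a - b) for a, b in zip(px, py)) / len(px)
    return total / num_projections


if __name__ == "__main__":
    teacher = [[0.0, 0.0], [1.0, 2.0], [3.0, -1.0]]
    student = [[x + 0.5, y - 0.5] for x, y in teacher]
    print(sliced_wasserstein(student, teacher))
```

In a distillation setting, one would treat `xs` as student features and `ys` as teacher features and minimise the returned value. A real training loop would express the same computation in a differentiable tensor library rather than plain Python lists.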


- Posted: 2021-12-07


- [Research] Prof. Yuseong Kim's Lab Wins 1st Prize in the Spectrum Challenge

- The Electronics and Telecommunications Research Institute (ETRI) held the Spectrum Challenge to research and develop core radio-wave technologies that enable various new wireless services to coexist. In the radio-wave utilization improvement track, eight university teams competed on the theme 'Finding an efficient communication method using reinforcement learning in a multi-frequency channel sharing network environment.' The CSI Lab (Computer Systems and Intelligence Lab) team, composed of Sungkyunkwan University Ph.D. student Jung-in Park and Professor Yoo-Seong Kim, won 1st place. Because CSI Lab also won first place in the 2020 Spectrum Challenge, this marks its second consecutive first-place finish. The Spectrum Challenge serves as a foundation for actively responding to paradigm shifts in the global use of frequencies, a key resource of the 4th industrial revolution and of a hyper-connected society, and for spearheading the development of core technologies that can overcome the limits of radio resource use. The technologies discovered and the R&D support provided through the Spectrum Challenge are expected to contribute greatly to the new supply and wider use of the 6 GHz band, demand for which is expected to surge. Full article: https://www.news1.kr/articles/?4493568


- Posted: 2021-11-29


- [Research] Professor Simon S. Woo’s Research Lab (DASH Lab) Publishes Two Papers at the Conference on Neural Information Processing Systems (NeurIPS) 2021

- Two papers from DASH Lab (https://dash-lab.github.io/) have been accepted for publication in the Datasets and Benchmarks Track of the 35th Conference on Neural Information Processing Systems (NeurIPS 2021, https://neurips.cc/). NeurIPS is a top-tier conference in machine learning and AI (h5-index: 245 according to Google Scholar, https://scholar.google.es/citations?view_op=top_venues&hl=en&vq=eng_artificialintelligence).

- Paper 1. VFP290K: A Large-Scale Benchmark Dataset for Vision-based Fallen Person Detection. Jaeju An*, Jeongho Kim*, Hanbeen Lee, Jinbeom Kim, Junhyung Kang, Minha Kim, Saebyeol Shin, Minha Kim, Donghee Hong, and Simon S. Woo. Abstract: Detection of fallen persons due to, for example, health problems, violence, or accidents, is a critical challenge. Accordingly, detection of these anomalous events is of paramount importance for a number of applications, including but not limited to CCTV surveillance, security, and health care. Given that many detection systems rely on such data, a comprehensive dataset comprising fallen person images collected under diverse environments and in various situations is crucial. However, existing datasets are limited to specific environmental conditions and lack diversity. To address these challenges and help researchers develop more robust detection systems, we create a novel, large-scale dataset for the detection of fallen persons, composed of fallen person images collected in various real-world scenarios with the support of the South Korean government. Our Vision-based Fallen Person (VFP290K) dataset consists of 294,714 frames of fallen persons extracted from 178 videos, including 131 scenes in 49 locations. We empirically demonstrate the effectiveness of the features through extensive experiments analyzing the performance shift based on object detection models. In addition, we evaluate our VFP290K dataset with properly divided versions of our dataset by measuring the performance of fallen person detection systems. We ranked first in the first round of the anomalous behavior recognition track of AI Grand Challenge 2020, South Korea, using our VFP290K dataset, which can be found here. Our achievement implies the usefulness of our dataset for research on fallen person detection, which can further extend to other applications, such as intelligent CCTV or monitoring systems. The data and more up-to-date information are provided at our VFP290K site.

- Paper 2. FakeAVCeleb: A Novel Audio-Video Multimodal Deepfake Dataset. Hasam Khalid, Shahroz Tariq, Minha Kim, and Simon S. Woo. Abstract: While significant advancements have been made in the generation of deepfakes using deep learning technologies, their misuse is now a well-known issue. Deepfakes can cause severe security and privacy issues, as they can be used to impersonate a person's identity in a video by replacing his/her face with another person's face. Recently, a new problem has emerged: generating a synthesized human voice of a person, where AI-based deep learning models can synthesize any person's voice from just a few seconds of audio. With the emerging threat of impersonation attacks using deepfake audio and video, a new generation of deepfake detectors is needed that focuses on video and audio collectively. Developing a competent deepfake detector typically requires a large amount of high-quality data that captures real-world scenarios. Existing deepfake datasets contain either deepfake videos or deepfake audio, and they are also racially biased. Hence, there is a crucial need for a good dataset containing both video and audio deepfakes, which can be used to detect audio and video deepfakes simultaneously. To fill this gap, we propose a novel Audio-Video Deepfake dataset (FakeAVCeleb) that contains not only deepfake videos but also the respective synthesized lip-synced fake audio. We generate this dataset using the most popular current deepfake generation methods. We selected real YouTube videos of celebrities with four racial backgrounds (Caucasian, Black, East Asian, and South Asian) to develop a more realistic multimodal dataset that addresses racial bias and further helps the development of multimodal deepfake detectors. We performed several experiments using state-of-the-art detection methods to evaluate our deepfake dataset and demonstrate the challenges and usefulness of our multimodal Audio-Video deepfake dataset.

- The above research was conducted entirely by students and a professor at SKKU, and publishing two papers at a top-tier conference clearly demonstrates SKKU's research competitiveness.


- Posted: 2021-10-21


- [Research] Professor Simon S. Woo’s Research Lab Publishes a Paper at ACM Multimedia 2021 (ACMMM 2021)

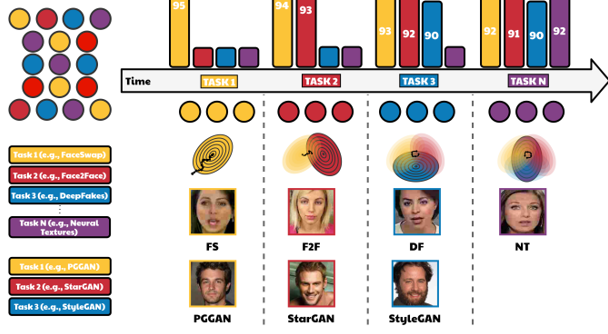

- Research by Professor Woo and his Data-driven AI Security HCI Lab (DASH Lab) students Minha Kim and Shahroz Tariq was accepted at a top-tier multimedia computer science conference, the ACM Multimedia Conference (ACMMM 2021, BK IF = 4). The work will be presented in October 2021 in Chengdu, China. In this work, the authors propose a method to detect fake media such as deepfakes and synthetic face images, which have recently emerged as a significant social issue. The method builds on deep learning techniques such as continual learning, knowledge distillation, and representation learning to efficiently detect deepfakes produced by both earlier and current generation techniques. Although existing methods detect deepfake videos and GAN-generated images with high performance, deepfake and GAN generation techniques keep diversifying, so countermeasures are needed that detect new manipulation techniques while remaining effective on old ones. However, general methods for detecting deepfake videos and GAN images require a large amount of training data for each generation technique, which is hard to obtain in practice and makes model training time-consuming. To address these problems, the authors had previously proposed a domain-adaptive transfer learning method that keeps a small subset of the data used for old tasks (the source dataset) to prevent the model from forgetting what it has learned. In practice, however, preserving such data long-term is challenging, and retraining on source-domain data may raise privacy concerns. Therefore, in this work, they developed CoReD, a continual learning-based algorithm that uses representation learning and knowledge distillation. Compared with other baselines, CoReD improved performance on target domains while maintaining detection accuracy on the source domain.
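CoReD is described as combining knowledge distillation with continual learning so that a detector adapts to new deepfake types without retraining on source-domain data. The sketch below shows only the standard temperature-scaled distillation term (the Hinton-style KL divergence between softened teacher and student outputs) plus a hypothetical combined objective. The function names, the weighting `alpha`, and the temperature are assumptions, and CoReD's representation-learning component is not modelled here.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax of a list of logits at a given temperature."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max before exp to avoid overflow
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def kd_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) between temperature-softened class distributions,
    multiplied by T^2 as in standard (Hinton-style) knowledge distillation."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    kl = sum(pt * math.log(pt / ps) for pt, ps in zip(p_teacher, p_student) if pt > 0)
    return kl * temperature ** 2


def continual_step_loss(student_logits, teacher_logits, label, alpha=0.5, temperature=2.0):
    """Hypothetical per-sample objective on the new (target) domain:
    cross-entropy on the target label plus a distillation term that keeps the
    student close to a frozen teacher trained on the source domain, so no
    source-domain samples are needed."""
    ce = -math.log(softmax(student_logits)[label])
    return (1.0 - alpha) * ce + alpha * kd_loss(student_logits, teacher_logits, temperature)
```

Raising `alpha` favours retaining the teacher's source-domain behaviour over fitting the new domain. That trade-off between plasticity and forgetting is the balance any continual-learning detector has to strike.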


- Posted: 2021-07-16


- [Research] Professor Simon S. Woo’s Research Lab Publishes a Paper at The Web Conference 2021

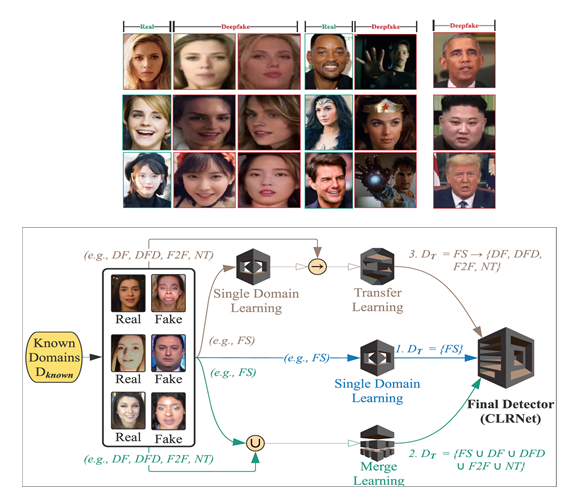

- A paper by Professor Woo and his DASH (Data-driven AI Security HCI, http://dash.skku.edu/) lab Ph.D. students Shahroz Tariq and Sangyup Lee was accepted at one of the top conferences in Web/Data Mining, The Web Conference (WWW) 2021 (BK IF = 4). The work will be presented in April. In this work, they propose a deep learning-based algorithm (using Convolutional LSTM, transfer learning, and domain adaptation techniques) to detect deepfakes efficiently. Deepfake generation is susceptible to abuse; many people with malicious intentions have taken advantage of these methods to generate fake videos of female celebrities. However, detecting these deepfakes or forged images/videos is challenging due to the lack of data. There is also an urgent need for a generalized classifier that performs well across different types of deepfakes. To prevent abuse of such deepfakes, this work introduces a Convolutional LSTM-based Residual Network (CLRNet), which applies a unique model training procedure and explores spatial and temporal information in deepfakes. The proposed model improves on state-of-the-art deepfake detection models, and its performance was validated on the DeepFake-in-the-Wild dataset.

- [Title] “One Detector to Rule Them All: Towards a General Deepfake Attack Detection Framework”, The Web Conference 2021 (WWW 2021). Deep learning-based video manipulation methods have become widely accessible to the masses. With little to no effort, people can quickly learn how to generate deepfake (DF) videos. In particular, females have been occasional victims of deepfakes, which are widely spread on the Web. While deep learning-based detection methods have been proposed to identify specific types of DFs, their performance suffers on other types of deepfake methods, including real-world deepfakes, on which they are not sufficiently trained. In other words, most of the proposed deep learning-based detection methods lack transferability and generalizability. Beyond detecting a single type of DF from benchmark deepfake datasets, we focus on developing a generalized approach to detect multiple types of DFs, including deepfakes from unknown generation methods such as DeepFake-in-the-Wild (DFW) videos. To better cope with unknown and unseen deepfakes, we introduce a Convolutional LSTM-based Residual Network (CLRNet), which adopts a unique model training strategy and explores spatial as well as temporal information in deepfakes. Through extensive experiments, we show that existing defense methods are not ready for real-world deployment, whereas our defense method (CLRNet) achieves far better generalization when detecting various benchmark deepfake methods (97.57% on average). Furthermore, we evaluate our approach with a high-quality DeepFake-in-the-Wild dataset collected from the Internet, containing numerous videos and more than 150,000 frames. Our CLRNet model generalizes well against high-quality DFW videos, achieving 93.86% detection accuracy and outperforming existing state-of-the-art defense methods by a considerable margin.


- Posted: 2021-01-25


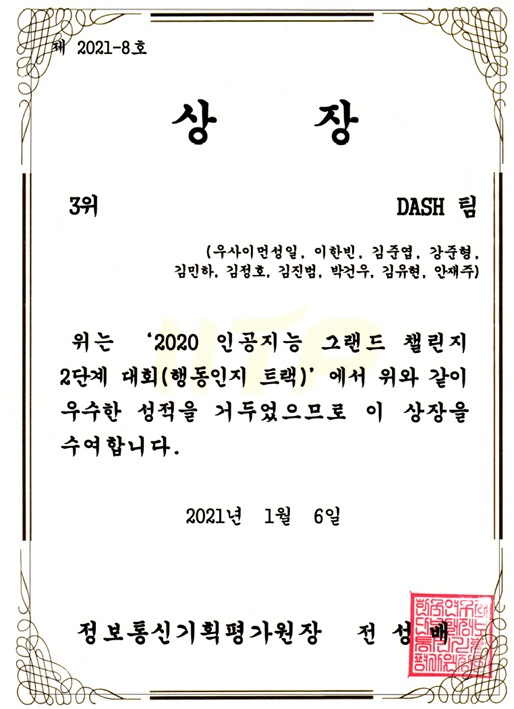

- [Research] Prof. Simon Woo’s DASH Lab Wins 3rd Place in the 2020 National Grand AI Challenge Competition Sponsored by IITP

- [Data-driven Security HCI Lab (DASH LAB): Won 3rd Place in the 2020 National Grand AI Challenge Competition, Advisor: Prof. Simon Woo] DASH LAB (https://dash-lab.github.io), led by Prof. Simon Woo of the Software/Applied Data Science Department, won 3rd place, along with a follow-up research grant, in the 2020 National Grand AI Challenge held in December 2020 and sponsored by IITP. Team members Hanbeen Lee (MS student, AI Dept.), JoonHyung Kang (MS student, AI Dept.), Junyaup Kim (MS student, CS Dept.), Minha Kim (MS student, CS Dept.), Jaeju An (MS student, CS Dept.), Jeongho Kim (senior, CS Dept.), Jinbeom Kim (senior, CS Dept.), Gunwoo Park (sophomore, CS Dept.), and Yoohyun Kim (sophomore, CS Dept.) worked hard for several months to prepare for the national AI competition and finally won 3rd place. They developed a novel lightweight deep learning-based object detection mechanism to effectively detect anomalies, namely 'fall down events', in CCTV videos. The competition was very challenging because the number of model submissions was limited and a large training set had to be collected. Also, the model had to be light enough to run on the GPU inference server. The DASH team's achievement is outstanding given that most participants and winners come from industry, which has far greater computing power and manpower; very few universities have won the competition. The DASH team will compete again this year in a more complex challenge that requires the object detection algorithm to run on a small edge device such as the NVIDIA Jetson Nano. Congratulations to the hard-working team members!


- Posted: 2021-01-21


- [Research] Sang-ik Hyun, a Master's Program Student, Publishes a Paper at the ECCV 2020 International Conference

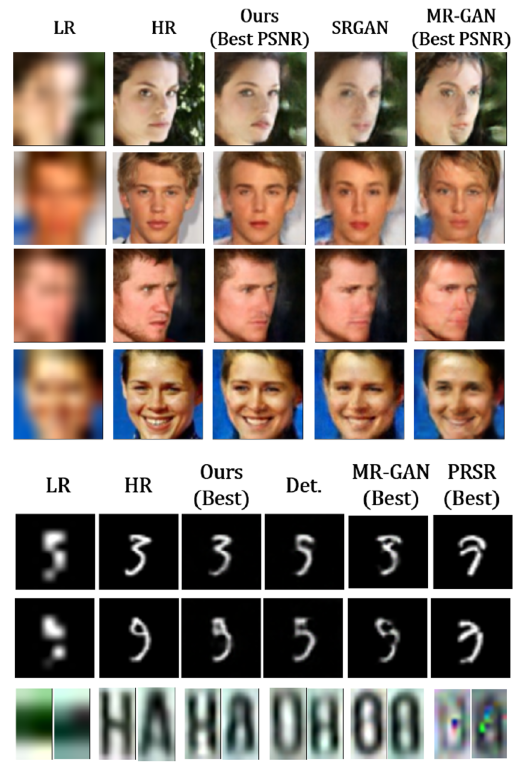

- Hyun Sang-ik, a first-year master's student in Artificial Intelligence in the research lab of Prof. Jae-pil Heo (supervisor), published a paper titled "VarSR: Variational Super-Resolution Network for Very Low Resolution Images" at the European Conference on Computer Vision (ECCV) 2020. ECCV is a top-tier academic conference in computer vision, and the paper is the result of research Hyun Sang-ik conducted as an undergraduate researcher. In this study, the authors present a deep learning model that performs super-resolution of extremely low-resolution images. Whereas existing super-resolution techniques assume a 1:1 mapping between low-resolution and high-resolution images, the VarSR network is designed to produce variable results by modeling a 1:N mapping, in which a single low-resolution image can correspond to multiple high-resolution images. The proposed model can be used in a variety of real-world applications, including the identification of very low-resolution faces and license plate images.

- Sangeek Hyun and Jae-Pil Heo, “VarSR: Variational Super-Resolution Network for Very Low Resolution Images”, European Conference on Computer Vision (ECCV), 2020.

- Abstract: As is well known, single image super-resolution (SR) is an ill-posed problem where multiple high resolution (HR) images can be matched to one low resolution (LR) image due to the difference in their representation capabilities. Such many-to-one nature is particularly magnified when super-resolving with large upscaling factors from very low dimensional domains such as 8x8 resolution, where detailed information of HR is hardly discovered. Most existing methods are optimized for deterministic generation of SR images under pre-defined objectives such as pixel-level reconstruction, and are thus limited to a one-to-one correspondence between LR and SR images, contrary to the nature of the problem. In this paper, we propose VarSR, Variational Super Resolution Network, that matches latent distributions of LR and HR images to recover the missing details. Specifically, we draw samples from the learned common latent distribution of LR and HR to generate diverse SR images reflecting the many-to-one relationship. Experimental results validate that our method can produce more accurate and perceptually plausible SR images from very low resolutions compared to the deterministic techniques.
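The abstract describes matching the latent distributions of LR and HR images and sampling from the learned latent distribution to obtain diverse SR outputs. Assuming, as in VAE-style models, that these latent distributions are diagonal Gaussians (an assumption; the announcement does not state VarSR's exact parameterisation), the matching term could be a KL divergence, for example pulling an LR-conditioned distribution q toward an HR-conditioned one p, and diversity could come from reparameterised sampling, as in this minimal sketch. The helper names are hypothetical, and the decoder that would turn each latent code into an SR image is omitted.

```python
import math
import random


def kl_diag_gaussians(mu_q, logvar_q, mu_p, logvar_p):
    """KL(q || p) between diagonal Gaussians, each given by per-dimension
    means and log-variances."""
    kl = 0.0
    for mq, lq, mp, lp in zip(mu_q, logvar_q, mu_p, logvar_p):
        # Closed form per dimension:
        # log(sigma_p / sigma_q) + (sigma_q^2 + (mu_q - mu_p)^2) / (2 sigma_p^2) - 1/2
        kl += 0.5 * (lp - lq + (math.exp(lq) + (mq - mp) ** 2) / math.exp(lp) - 1.0)
    return kl


def sample_latents(mu, logvar, n, seed=0):
    """Draw n latent codes z = mu + sigma * eps with eps ~ N(0, I)
    (the reparameterisation trick)."""
    rng = random.Random(seed)
    sigma = [math.exp(0.5 * lv) for lv in logvar]
    return [[m + s * rng.gauss(0.0, 1.0) for m, s in zip(mu, sigma)] for _ in range(n)]
```

Calling `sample_latents` several times with the same mean and log-variance yields different codes for one LR input; a decoder would map each code to a different plausible SR image, which is the one-to-many behaviour the abstract describes.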


- Posted: 2020-10-06


- [Research] DASH Lab participated in the AI Grand Challenge and won first place

- Master's and undergraduate researchers from the Data-driven AI Security HCI Lab (DASH Lab) participated in the AI Grand Challenge organized by IITP (the Institute for Information and Communication Technology Promotion) and won first place. The AI Grand Challenge is a demanding R&D competition in which participants compete by developing algorithms that address challenging problems. The "2020 AI Grand Challenge" will be held in four stages over three years, until 2022, with four tracks: various emergency situations (behavior recognition), violence (voice recognition), classification of household waste (object recognition), and reduction of power consumption through artificial intelligence optimization. The goal of this challenge is to create a convenient and safe living environment through artificial intelligence technology. Professor Simon Woo’s research team participated in the behavior recognition track (Track 1) and won first place by developing an algorithm that recognizes abnormal behavior of emergency patients based on image analysis. The AI Grand Challenge marks its fourth competition this year. A total of 134 teams from universities, research institutes, and companies, comprising 566 people, participated in the first-stage competition, and five outstanding research teams were selected for each track. These 20 teams will participate in the second-stage competition, to be held offline in November this year, with an additional 200 million won in research funding each (4 billion won in total). In the second stage, graduate students from the Department of Computer Engineering (Joon-yeop Jeon, So-won Jeon, etc.), undergraduate researchers (Jung-ho Kim, Gun-woo Park, Hee-sung Kim, etc.), and students from the Department of Applied Artificial Intelligence and the Department of Data Science will participate.
http://www.ai-challenge.kr/sub03/view/id/43


- Posted: 2020-09-24


- [Research] Graduate School of Convergence Security Track Builds Cyber Security Alliance

- Sungkyunkwan University's Graduate School of Convergence Security Track has built a Cyber Security Alliance with 14 universities around the world. The track aims to train convergence security experts in digital healthcare. Professor Hyung-kee Choi said, "We will lead the field of medical and health convergence security with the objective of Creative N.E.X.T.” Sungkyunkwan University, which boasts a 622-year history, is pursuing its vision of becoming a leading global university through this year's "VISION2020+”. It aims to become a global hub university for knowledge and talent networks, securing the best reputation at home and abroad by producing world-class education and research achievements. As part of this, Sungkyunkwan University plans to promote "research and talent training in the cyber security field" more systematically and professionally, since SKKU was selected for the "Convergence Security Core Talent Training Project" in April 2020. The Graduate School of Convergence Security Track is preparing to admit its first students in 2021, along with a curriculum that will foster convergence security professionals in the "digital health field”. We have assembled an outstanding teaching staff for digital healthcare convergence security lectures, research, and practice, as follows:

- Seven full-time professors specializing in security areas such as software security, embedded vulnerability analysis, user-centered security, and artificial intelligence security
- Three full-time digital healthcare information security professors
- Two industry-academic cooperation professors in charge of industry-academic cooperation

- The head professor, Hyung-kee Choi, said, “The global biohealth industry, which was worth 1.9 trillion won in 2015, is expected to reach 3.23 trillion won by 2025, and the digital healthcare sector will be driven as a major industry.” He also described the digital healthcare field: “As seen in the ongoing COVID-19 situation, contactless ('untact') and online ('ontact') environments are no longer optional. The adoption of smart technology in the medical and health sectors will accelerate further, alongside the needs of an era of rapid progress in the fourth industrial revolution and an aging population.” In addition, Prof. Hyung-kee Choi emphasized that all medical and health devices, or devices linked to services expanding over 5G connections, will eventually face unprecedented security challenges, and that the IT infrastructure of medical institutions and homes will urgently need security technologies to protect sensitive medical data. He added, "In order to cultivate professional technologies and talent that will solve security difficulties in the critical field of digital healthcare, SKKU will cooperate with 25 corporations including Cisco and AhnLab, 14 international universities including Stanford and USC, three research institutes, and medical institutions.
Additionally, SKKU will run industrial activities, joint research, and talent exchanges.” The Graduate School of Convergence Security Track, which selects a total of 15 students through regular and rolling admissions, will operate a curriculum that reflects the on-site demands of its 25 consortium members, and plans to cultivate talented people who will lead the medical and health convergence security field with the educational goal of "Creative N.E.X.T." In particular, it aims to foster experts in 'Convergence Security Technology Development', building the capability to interpret security needs in digital healthcare domains and to develop optimal convergence security technologies that solve problems, rather than simply combining different elements. Moreover, practical information security education will be provided so that graduates can act as convergence security experts not only in digital healthcare but also in other domains. "The Graduate School of Convergence Security Track at Sungkyunkwan University has established a Cyber Security Alliance with 14 foreign universities, giving it a global platform for research and lecture exchanges, and it has differentiated strengths that no other graduate school can match, including collaboration with on-campus graduate schools such as the AI, Interaction Science, and Big Data graduate schools, and cooperation with Samsung Hospital and medical schools." In particular, Professor Hyung-kee Choi emphasized that, in the era of the 4th Industrial Revolution and an aging population, increasingly smart medical devices face unprecedented security problems due to services expanding over 5G, so the IT infrastructure of medical institutions is in dire need of security technologies that protect sensitive data and ensure safety. For this reason, it is necessary to develop core security source technologies for the "untact" era that meet the needs of industries urgently requiring convergence security, so that these industries can open up with confidence. This is the path the Sungkyunkwan University Graduate School of Convergence Security Track is taking. "We are admitting our first class of students in 2021. To this end, we are pooling our professors' capabilities to select and develop outstanding first-year students. In addition, we will continue to grow as a graduate school even after the Graduate School of Convergence Security Track Project is completed.” https://www.boannews.com/media/view.asp?idx=90313&kind=


- Posted: 2020-09-23


- [Research] Research Team of Professors Park Eun-il and Han Jin-young at the College of Software Studies Ways to Diagnose and Respond to Mental Illness Through Social Media

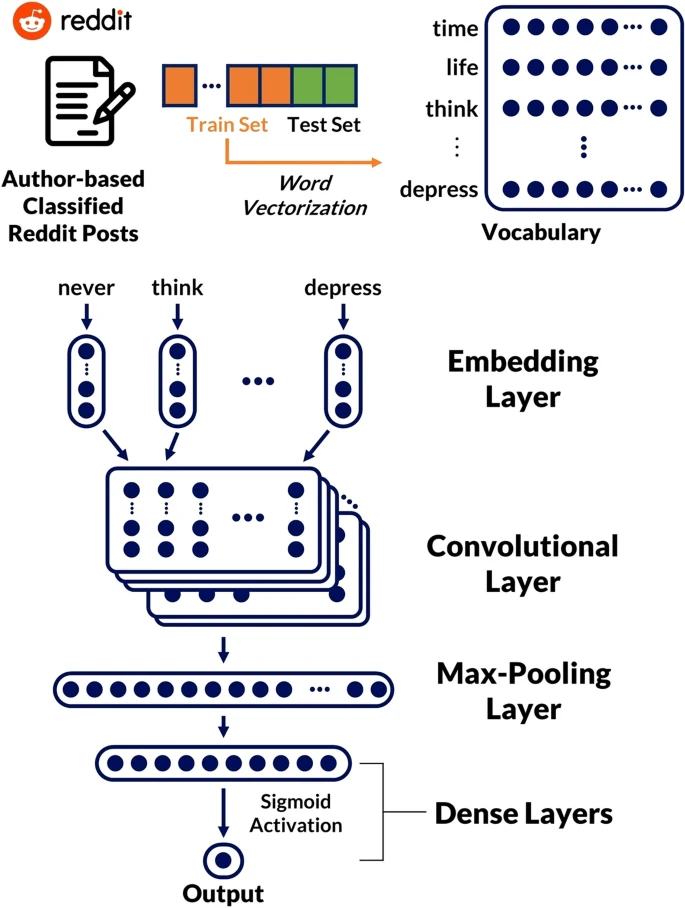

- The research team of Professors Park Eun-il and Han Jin-young at the College of Software (with master's student Kim Ji-na and integrated master's/Ph.D. student Lee Ji-eon) announced that it published a paper titled “Deep Learning Model for Predicting Mental Diseases through Social Media” in the July issue of Scientific Reports. Through this research, the team introduced deep learning-based artificial intelligence models to diagnose and respond to mental illness early, and suggested directions for future research. The team developed a deep learning model that identifies various mental illnesses from posts that social media users write to share their feelings. The model produced innovative results in identifying which mental disorders (e.g., depression, anxiety, bipolar disorder, schizophrenia) the authors of the posts were associated with. The research team used 633,385 posts from Reddit, one of the largest social media platforms, and applied deep learning classification models based on convolutional neural networks. Over 96% of those with Autism Spectrum Disorder and at least 75% of those with other mental disorders can be detected by the model.

[Figure: Convolutional Neural Network-Based Classification Model Structure]

- The collected posts were converted into vectors using Word2vec, a representative word embedding technique, and a mental illness classification model was designed using a convolutional neural network.
“Mental illness has recently emerged as a new social problem,” the research team said. “Utilizing social media data created by users will greatly help us predict and treat mental illness early.” In view of the recent ethical debate over the use of big data in the information age, the research was conducted under strict oversight through IRB approval procedures. Building on this research, the team plans to develop a deep learning model that predicts potential mental illness from Korean text.
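The pipeline described in this item (Word2vec word vectors fed to a convolutional neural network) can be illustrated with a minimal, dependency-free sketch of a text CNN forward pass: look up word vectors, slide a filter over consecutive words, max-pool over time, and apply a logistic output. The tiny embedding table, filter weights, and output weights are hypothetical stand-ins for trained parameters, and this binary scorer is not the team's actual model.

```python
import math

# Hypothetical 2-dimensional word vectors standing in for trained Word2vec embeddings.
EMBEDDINGS = {
    "i": [0.1, 0.0],
    "feel": [0.2, 0.1],
    "hopeless": [0.9, -0.4],
    "happy": [-0.7, 0.6],
    "today": [0.0, 0.1],
}


def embed(tokens, table, dim=2):
    """Look up each token's vector; unknown words map to a zero vector."""
    return [table.get(tok, [0.0] * dim) for tok in tokens]


def conv1d_max_pool(vectors, kernel, bias=0.0):
    """Slide a filter spanning len(kernel) consecutive words over the sequence,
    apply ReLU, and keep the maximum response (max-over-time pooling)."""
    width = len(kernel)
    best = 0.0  # ReLU outputs are never negative, so 0.0 is a safe floor
    for start in range(len(vectors) - width + 1):
        window = vectors[start:start + width]
        act = bias + sum(
            w * x for row_w, row_x in zip(kernel, window) for w, x in zip(row_w, row_x)
        )
        best = max(best, act)
    return best


def predict(tokens, filters, out_weights, out_bias):
    """Score a post: one pooled feature per filter, then a logistic output
    giving a probability for a single (hypothetical) disorder label."""
    vectors = embed(tokens, EMBEDDINGS)
    features = [conv1d_max_pool(vectors, k) for k in filters]
    logit = out_bias + sum(w * f for w, f in zip(out_weights, features))
    return 1.0 / (1.0 + math.exp(-logit))


if __name__ == "__main__":
    filters = [[[1.0, -1.0], [1.0, -1.0]]]  # one width-2 filter
    print(predict(["i", "feel", "hopeless"], filters, [2.0], -1.0))
    print(predict(["i", "feel", "happy"], filters, [2.0], -1.0))
```

With trained Word2vec vectors, many filters of several widths, and one output per disorder category, the same structure extends to the multi-disorder setting described in the article.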


- Posted: 2020-08-18

