[Research] Professor Simon Woo’s Research Lab Publishes a Paper at AAAI 2022

- College of Software and Engineering

- 2021-12-07

Research by Professor Simon S. Woo and Binh M. Le, a student in his Data-driven AI Security HCI Lab (DASH Lab), has been accepted at the Thirty-Sixth AAAI Conference on Artificial Intelligence (AAAI 2022), a top-tier computer science conference (acceptance rate = 15%, BK IF = 4). The work will be presented in February 2022 in Vancouver, Canada.

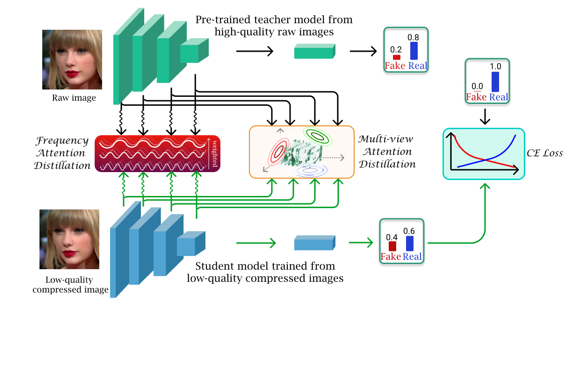

In this work, the authors propose a novel method for detecting low-quality, compressed deepfake images. The method draws on optimal transport theory, frequency-domain learning, and knowledge distillation to transfer representations from a teacher model pre-trained on high-quality images to a student model trained to detect low-quality compressed images.

The authors argue that low-quality images pose two main challenges for a detection model: the loss of high-frequency information and the loss of correlation within a compressed image. To address these challenges, they propose Attention-based Deepfake detection Distillations, which combines frequency attention distillation and multi-view attention distillation in a Knowledge Distillation (KD) framework to detect highly compressed deepfakes. The frequency attention helps the student retrieve and focus more on high-frequency components from the teacher. The multi-view attention, inspired by the Sliced Wasserstein distance, pushes the student's output tensor distribution toward the teacher's, maintaining correlated pixel features between tensor elements from multiple views.
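
To give a feel for the idea behind the multi-view attention, the sketch below computes a generic Sliced Wasserstein distance between two sets of feature vectors. It is not the authors' implementation: the function name `sliced_wasserstein`, its parameters, and the random features standing in for teacher and student outputs are all assumptions made for illustration. Each random direction acts as one "view"; in one dimension, optimal transport between two equal-sized sets reduces to matching sorted values.

```python
import numpy as np


def sliced_wasserstein(student, teacher, n_projections=64, seed=0):
    """Approximate squared sliced 2-Wasserstein distance (illustrative only).

    student, teacher: arrays of shape (n_points, dim), same shape.
    """
    rng = np.random.default_rng(seed)
    dim = student.shape[1]

    # Random unit directions; each one is a "view" of the feature space.
    directions = rng.normal(size=(n_projections, dim))
    directions /= np.linalg.norm(directions, axis=1, keepdims=True)

    # Project both point sets onto every direction:
    # result has shape (n_points, n_projections).
    s_proj = student @ directions.T
    t_proj = teacher @ directions.T

    # In 1-D, the optimal transport plan pairs sorted values.
    s_sorted = np.sort(s_proj, axis=0)
    t_sorted = np.sort(t_proj, axis=0)

    # Average squared gap over all points and all views.
    return float(np.mean((s_sorted - t_sorted) ** 2))


# Hypothetical stand-ins for teacher and student feature tensors.
rng = np.random.default_rng(1)
teacher_feat = rng.normal(size=(256, 32))
student_feat = teacher_feat + 0.5  # a shifted copy of the teacher features

print(sliced_wasserstein(teacher_feat, teacher_feat))  # identical sets
print(sliced_wasserstein(student_feat, teacher_feat))  # shifted set
```

In a distillation setting, a quantity of this kind could serve as a loss term that shrinks as the student's feature distribution approaches the teacher's; how the paper weights and combines it with the frequency attention is not covered here.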

In the experiments, the authors use several benchmark datasets to validate the effectiveness of their proposed method in comparison with many previous state-of-the-art detection models.