[Research] Professor Simon Woo’s DASH Lab publishes two papers at The Web Conference (WWW) 2022

- College of Software and Engineering

- 2022-02-03

Professor Simon S. Woo’s DASH Lab is publishing two papers at The Web Conference (WWW), a top-tier computer science conference on the web and data mining, to be held in April 2022 in Lyon, France.

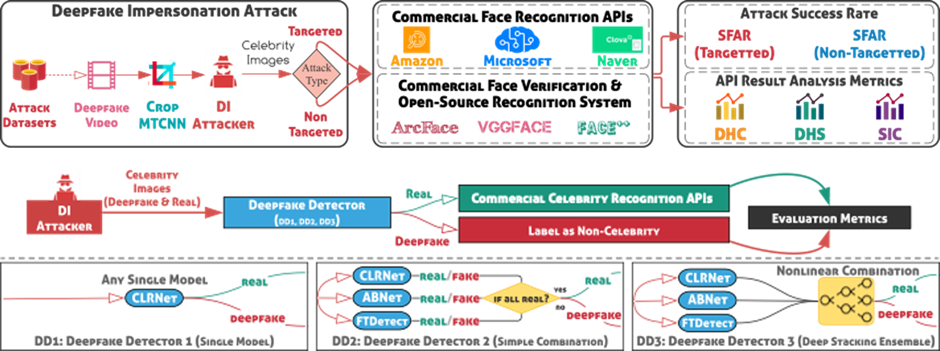

Paper 1. “Am I a Real or Fake Celebrity? Evaluating Face Recognition and Verification APIs under Deepfake Impersonation Attack” (Shahroz Tariq, Sowon Jeon, and Simon S. Woo*)

Abstract: Web-based multimedia technologies, such as face recognition web services powered by deep learning, have advanced significantly in recent years. As a result, companies such as Microsoft, Amazon, and Naver provide highly accurate commercial face recognition web services for a variety of multimedia applications. Naturally, such technologies face persistent threats, as virtually anyone with access to deepfakes can quickly launch impersonation attacks. These attacks pose a serious threat to authentication services, which rely heavily on the performance of their underlying face recognition technologies. Despite the gravity of this threat, the robustness of commercial web services against deepfake abuse has not been thoroughly measured or investigated. Through a case study on celebrity face recognition, we examine the robustness of black-box commercial face recognition web APIs (Microsoft, Amazon, Naver, and Face++) and open-source tools (VGGFace and ArcFace) against Deepfake Impersonation (DI) attacks. Although most of these APIs make no specific claims of deepfake robustness, we find that authentication mechanisms may nonetheless be built on top of them. We demonstrate the vulnerability of face recognition technologies to DI attacks, achieving a success rate of 78.0% for targeted (TA) attacks. We also propose mitigation strategies that lower the TA attack success rate to as low as 1.26% with adversarial training.
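To make the headline numbers concrete, a targeted attack success rate can be read as the fraction of deepfake impersonation attempts that a face recognition system identifies as the intended target. The sketch below assumes that definition. The `Attempt` record and the `targeted_success_rate` function are hypothetical: they do not correspond to any vendor API or to the authors' evaluation code, and the demo inputs are invented toy values, not results from the paper.

```python
from dataclasses import dataclass


@dataclass
class Attempt:
    """One deepfake impersonation attempt (hypothetical record)."""
    target_identity: str     # celebrity the deepfake is meant to impersonate
    predicted_identity: str  # identity returned by the face recognition service


def targeted_success_rate(attempts: list[Attempt]) -> float:
    """Fraction of attempts recognized as the intended target.

    Assumes a targeted attack succeeds only when the predicted identity
    equals the target identity; returns 0.0 for an empty list.
    """
    if not attempts:
        return 0.0
    hits = sum(1 for a in attempts if a.predicted_identity == a.target_identity)
    return hits / len(attempts)


if __name__ == "__main__":
    # Toy inputs for illustration only.
    demo = [
        Attempt("celebrity_a", "celebrity_a"),
        Attempt("celebrity_a", "unknown"),
        Attempt("celebrity_b", "celebrity_b"),
        Attempt("celebrity_b", "celebrity_b"),
    ]
    print(f"{targeted_success_rate(demo):.2%}")  # 75.00%
```

Under this reading, a 78.0% TA success rate means that roughly 78 of every 100 targeted deepfakes were accepted as the target celebrity, and a 1.26% rate means that very few still were after mitigation.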

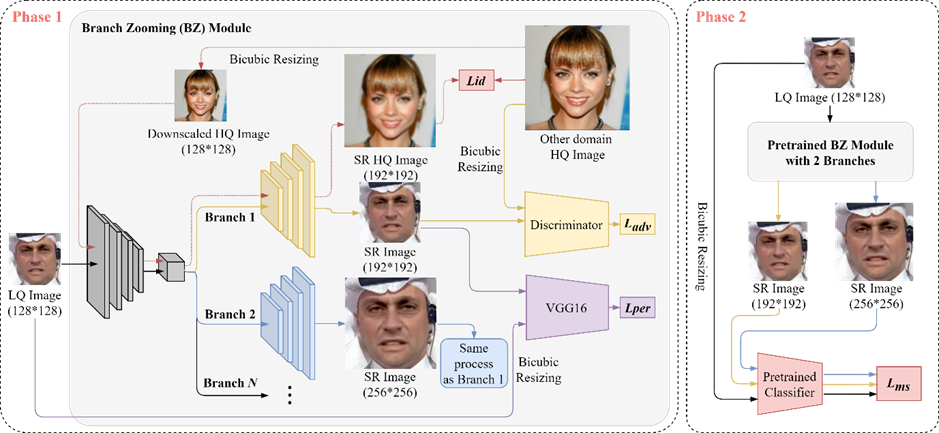

Paper 2. “BZNet: Unsupervised Multi-scale Branch Zooming Network for Detecting Low-quality Deepfake Videos” (Sangyup Lee, Jaeju An, and Simon S. Woo*)

In this work, the authors propose the multi-scale Branch Zooming Network (BZNet), a novel method for detecting low-quality (LQ) Deepfakes. In real-world scenarios, Deepfake videos are often compressed into LQ videos, which take up less storage space and spread more easily through the web and social media. Such LQ Deepfake (DF) videos are much harder to detect than high-quality (HQ) DF videos. To address this challenge, the authors rethink the design of standard deep learning-based DF detectors, specifically exploiting the feature extraction stage to enhance the features of LQ images.

BZNet adopts an unsupervised super-resolution (SR) technique and uses multi-scale images for training. The authors train BZNet using only highly compressed LQ images, reflecting a realistic setting in which HQ training data are not readily accessible. Extensive experiments on multiple Deepfake datasets demonstrate that the BZNet architecture improves the detection accuracy of existing CNN-based classifiers and outperforms state-of-the-art Deepfake detection methods. The authors also suggest that the multi-scale learning process has the potential to push past the limitations of existing CNN-based classifiers and to achieve comparable results on similar low-quality vision tasks.
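This article does not describe BZNet's internals, so the following is only a generic illustration of what "multi-scale images" can mean: one LQ frame rendered at several zoom levels. Plain nearest-neighbour upsampling with NumPy stands in for the unsupervised super-resolution model the authors use. It enlarges the image without recovering any detail. The `multi_scale_views` and `upsample_nearest` functions and the scale factors (1, 2, 4) are assumptions chosen for illustration.

```python
import numpy as np


def upsample_nearest(image: np.ndarray, factor: int) -> np.ndarray:
    """Enlarge an image along its first two axes by an integer factor.

    Nearest-neighbour stand-in for a learned super-resolution model:
    each pixel is simply repeated, so no new detail is created.
    """
    return image.repeat(factor, axis=0).repeat(factor, axis=1)


def multi_scale_views(image: np.ndarray, factors=(1, 2, 4)) -> list[np.ndarray]:
    """Return the same frame at several zoom levels (hypothetical scales)."""
    return [upsample_nearest(image, f) for f in factors]


if __name__ == "__main__":
    lq_frame = np.random.default_rng(0).random((64, 64, 3))  # toy H x W x C crop
    for view in multi_scale_views(lq_frame):
        print(view.shape)
```

In an SR-based design, each zoomed view would come from a model that tries to restore detail lost to compression, and a detector could then examine the frame at several scales instead of only at its original low resolution.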

Please contact Simon Woo (swoo@g.skku.edu) with any questions about the above research.