-

- [Research] A research paper from Professor Lee Sang-won's lab (master's student Lee Bo-hyun) is accepted at VLDB 2023

- A paper by master's student Lee Bo-hyun and Dr. Ahn Mi-jin (graduated) of the VLDB Laboratory (advisor: Professor Lee Sang-won), "LRU-C: Parallelizing Database I/Os for Flash SSDs", has been accepted for publication at the 49th International Conference on Very Large Data Bases (VLDB). VLDB is a top-tier academic conference in the database field and will be held in Vancouver, Canada. [Research contents] Traditional database buffer managers serialize I/O requests because of read stalls and mutex conflicts. Serialized I/O lowers storage and CPU utilization, which limits transaction throughput and increases latency. The harm is especially pronounced on flash SSDs, which have asymmetric read/write speeds and rich I/O parallelism. In this work, the authors propose LRU-C, a novel database buffering approach that issues database I/Os in parallel to exploit the parallelism of flash SSDs. LRU-C introduces the LRU-C pointer, which points to the least recently used clean page in the LRU list. On a page miss, LRU-C selects the current LRU clean page as the victim and advances the pointer to the next LRU clean page in the list. Because the victim is already clean, the miss does not have to wait for a dirty page to be written out first, so LRU-C prevents the I/O serialization caused by read stalls. Building on the LRU-C pointer, the authors propose two optimizations to improve I/O throughput: dynamic batch write and parallel LRU list management. The former flushes more dirty pages at once, while the latter mitigates the I/O serialization caused by two mutexes. In OLTP experiments with a MySQL-based LRU-C prototype on flash SSDs, LRU-C improved transaction throughput by 3x and 1.5x over vanilla MySQL and a state-of-the-art WAR solution, respectively, and significantly reduced tail latency. LRU-C lowers the buffer hit ratio slightly, but the higher I/O throughput significantly offsets the drop in hit ratio.
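The victim-selection rule described above can be illustrated with a small, single-threaded Python model. This is a sketch under our own assumptions, not the authors' MySQL code: the names `LRUCBufferPool`, `access`, `flush_batch`, and `stalls` are hypothetical, page reads and writes are only simulated, and neither mutexes nor parallel LRU lists are modeled. Pages sit in an `OrderedDict` from least to most recently used, `lru_c` plays the role of the LRU-C pointer, and `stalls` counts misses that found no clean page and so had to write a dirty page before reading, which is the read stall LRU-C aims to avoid.

```python
from collections import OrderedDict


class LRUCBufferPool:
    """Toy model of LRU-C-style victim selection (hypothetical names).

    Pages are kept in an OrderedDict ordered from least to most recently
    used. `lru_c` points at the least recently used *clean* page.
    """

    def __init__(self, capacity):
        self.capacity = capacity
        self.pages = OrderedDict()  # page_id -> True if dirty, LRU end first
        self.lru_c = None           # LRU-C pointer, or None if no page is clean
        self.stalls = 0             # misses that had to write a dirty page first

    def _advance(self):
        # Point at the oldest clean page. This rescans from the LRU end for
        # brevity; keeping a pointer is what lets a real system avoid the scan.
        self.lru_c = next((p for p, dirty in self.pages.items() if not dirty), None)

    def _evict(self):
        if self.lru_c is not None:
            victim = self.lru_c              # clean victim: no write needed
        else:
            victim = next(iter(self.pages))  # all dirty: LRU page must be written
            self.stalls += 1                 # the pending read waits on that write
        del self.pages[victim]
        self._advance()

    def access(self, page_id, write=False):
        """Read (or update, if `write`) a page, loading it on a miss."""
        if page_id in self.pages:            # hit: move to the MRU end
            self.pages[page_id] = self.pages[page_id] or write
            self.pages.move_to_end(page_id)
        else:                                # miss: free a frame, then "read"
            if len(self.pages) >= self.capacity:
                self._evict()
            self.pages[page_id] = write
        # The pointer only needs fixing if it was unset or its page moved.
        if self.lru_c is None or self.lru_c == page_id:
            self._advance()

    def flush_batch(self, n):
        """Write back the n oldest dirty pages in one batch; they become clean."""
        batch = [p for p, dirty in self.pages.items() if dirty][:n]
        for p in batch:
            self.pages[p] = False            # position in the LRU list is unchanged
        self._advance()
        return batch


pool = LRUCBufferPool(capacity=3)
pool.access(1, write=True)   # page 1 is dirty
pool.access(2)
pool.access(3)
pool.access(4)               # miss: evicts clean page 2, not the dirty LRU page 1
print(list(pool.pages), pool.lru_c, pool.stalls)  # [1, 3, 4] 3 0
```

In this model a plain LRU policy would have picked page 1 and forced a write before the read of page 4; the LRU-C rule skips it because a clean victim exists.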

-

- Posted on 2023-05-04

-

- [Student] 2022 Semester 2 College of Computing and Informatics Thesis/Project Presentation

- On Wednesday, November 23, the College of Computing and Informatics held its Thesis/Project presentation for the second semester of 2022 in the lobby of the Semiconductor Building and in Room 330110. Students' theses and projects were also posted on the online homepage (https://cs.skku.edu/gp). A total of 100 people posted and presented the theses and projects they had worked on over the year, and students and professors attended together to evaluate the research, select the winners, and wrap up the year. The awards went to: Gold Prize: Park Hyung-jun (박형준); Silver Prize: Kang Byung-nam (강병남), Park So-young (박소영); Bronze Prize: Kim Yong-hwan (김용환), Jeon Yoon-tae (전윤태), Kim Min-je (김민제); Excellent Presentation Award: Hwang Jun-won (황준원), Choi Si-yeol (최시열). Once again, thank you to all the students who worked hard to complete their theses and projects.

-

- Posted on 2022-11-29

-

- [Research] Prof. Simon Woo’s DASH Lab publishes 5 full conference papers at CIKM 2022

- DASH Lab (https://dash-lab.github.io/), led by Prof. Simon Woo, has published five full papers at CIKM 2022 (BK IF=3). The papers cover: research with the Korea Aerospace Research Institute (KARI) on satellite system anomaly and orbit prediction; research on neural network pruning; joint research with the University of Southern California (USC) in the US on detecting content in YouTube videos that is harmful to children; joint research with CSIRO Data61 in Australia on adversarial attacks on time series data; and research on a novel self-knowledge distillation (Self-KD) method to improve downstream computer vision tasks. Thanks to the students who did exceptional work!

1. Youjin Shin, Eun-Ju Park, Simon S. Woo, Okchul Jung, and Daewon Chung, "Selective Tensorized Multi-layer LSTM for Orbit Prediction", Proceedings of the 31st ACM International Conference on Information & Knowledge Management, 2022. Collisions between space objects not only incur high costs but also threaten human life, and the risk of collision between satellites has increased as the number of satellites has grown rapidly with strong interest in space applications. However, monitoring a satellite's behavior in real time is not trivial, since communication between the ground station and the spacecraft is dynamic and sparse, and the long distance adds latency. Accurate orbit prediction is therefore strongly needed to prevent unexpected contingencies such as collisions. Furthermore, the prediction model must be compressed while maintaining high prediction performance so that it can be deployed in real systems. Although several machine learning and deep learning-based approaches have been studied to address these issues, most apply only basic machine learning models to orbit prediction without considering the size, running time, and complexity of the prediction model.
In this research, we propose a Selective Tensorized multi-layer LSTM (ST-LSTM) for orbit prediction, which not only improves orbit prediction performance but also compresses the model so that it can be applied in practical deployment scenarios. To evaluate our model, we use a real orbit dataset collected over five years from the Korea Multi-Purpose Satellites (KOMPSAT-3 and KOMPSAT-3A) of the Korea Aerospace Research Institute (KARI). In addition, we compare ST-LSTM with other machine learning-based regression models, LSTM, and basic tensorized LSTM models in terms of prediction performance, model compression rate, and running time.

2. Gwanghan Lee, Saebyeol Shin, and Simon S. Woo, "Accelerating CNN via Dynamic Pattern-based Pruning Network", Proceedings of the 31st ACM International Conference on Information & Knowledge Management, 2022. Most dynamic pruning methods fail to achieve actual acceleration because of the extra overhead of indexing and weight copying needed to implement dynamic sparse patterns for every input sample. To address this issue, we propose the Dynamic Pattern-based Pruning Network, which preserves the advantages of both static and dynamic networks. Unlike previous dynamic pruning methods, our method dynamically fuses static kernel patterns, enhancing the kernels' representational power without additional overhead. Moreover, our dynamic sparse pattern can be processed efficiently with BLAS libraries, achieving actual acceleration. We demonstrate the effectiveness of the proposed network on CIFAR and ImageNet, where it outperforms state-of-the-art methods with better accuracy at lower computational cost.

3. Binh M. Le, Rajat Tandon, Chingis Oinar, Jeffrey Liu, Uma Durairaj, Jiani Guo, Spencer Zahabizadeh, Sanjana Ilango, Jeremy Tang, Fred Morstatter, Simon Woo, and Jelena Mirkovic, "Samba: Identifying Inappropriate Videos for Young Children on YouTube", Proceedings of the 31st ACM International Conference on Information & Knowledge Management, 2022. In this paper, we propose a fusion model, called Samba, which uses both metadata and video subtitles to classify the content of YouTube videos for kids. Previous studies used metadata such as video thumbnails, titles, and comments to detect videos inappropriate for young viewers. Such metadata-based approaches achieve high accuracy but still make significant misclassifications because of the limited reliability of the input features. By adding representation features from subtitles, pretrained with a self-supervised contrastive framework, Samba outperforms other state-of-the-art classifiers by at least 7%. We also publish a large-scale, comprehensive dataset of 70K videos for future studies.

4. Shahroz Tariq, Binh M. Le, and Simon Woo, "Towards an Awareness of Time Series Anomaly Detection Models' Adversarial Vulnerability", Proceedings of the 31st ACM International Conference on Information & Knowledge Management, 2022. Time series anomaly detection is studied in statistics, ecology, and computer science. Numerous deep learning-based time series anomaly detection strategies have been presented. Many of them show state-of-the-art performance on benchmark datasets, giving the false impression that they are robust and deployable in a wide variety of real-world scenarios. In this study, we demonstrate that adding modest adversarial perturbations to sensor data severely weakens anomaly detection systems.
Under well-known adversarial attacks such as the Fast Gradient Sign Method (FGSM) and Projected Gradient Descent (PGD), we demonstrate that the performance of state-of-the-art deep neural networks (DNNs) and graph neural networks (GNNs), which claim to be robust to anomalies and could be used in real-world systems, drops to 0%. To our knowledge, this is the first demonstration of the vulnerability of anomaly detection systems to adversarial attacks. This study aims to raise awareness of the adversarial vulnerabilities of time series anomaly detectors.

5. Hanbeen Lee, Jeongho Kim, and Simon Woo, "Sliding Cross Entropy for Self-Knowledge Distillation", Proceedings of the 31st ACM International Conference on Information & Knowledge Management, 2022. Knowledge distillation (KD) is a powerful technique for improving the performance of a small model by leveraging the knowledge of a larger model. Despite its remarkable performance gains, KD has the drawback of the substantial computational cost of pre-training the larger model in advance. Recently, self-knowledge distillation has emerged as a way to improve a model's performance without a separate, larger teacher model. In this paper, we present a novel plug-in approach called Sliding Cross Entropy (SCE), which can be combined with existing self-knowledge distillation methods to significantly improve performance. Specifically, to minimize the difference between the model's output and the soft target obtained by self-distillation, we split each softmax representation by a certain window size and reduce the distance between the sliced parts. Through this approach, the model evenly considers all inter-class relationships of a soft target during optimization. Extensive experiments show that our approach is effective in various tasks, including classification, object detection, and semantic segmentation. We also demonstrate that SCE consistently outperforms existing baseline methods.
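Paper 4 above names FGSM as one of the attacks. As a rough illustration of the idea, and not the authors' experimental setup, the sketch below applies one FGSM step to a hypothetical linear anomaly detector whose score is `w · x + b` over a window of sensor readings `x`; a window is flagged when the score is positive. FGSM perturbs the input as `x + eps * sign(grad_x L)`. An attacker hiding an anomaly takes `L = -score`, and for a linear score the input gradient of the score is just `w`, so the step becomes `x - eps * sign(w)`. The weights, bias, window, and `eps` are made-up values.

```python
def anomaly_score(window, weights, bias):
    """Hypothetical linear detector: a score > 0 means 'anomalous'."""
    return sum(w * x for w, x in zip(weights, window)) + bias


def sign(v):
    return (v > 0) - (v < 0)


def fgsm_evade(window, weights, eps):
    """One FGSM step that lowers the anomaly score.

    The gradient of the linear score w.r.t. the input is `weights`, so each
    reading moves by eps against the sign of its gradient component.
    """
    return [x - eps * sign(w) for w, x in zip(weights, window)]


weights = [0.5, -0.2, 0.8, 0.1]   # made-up detector parameters
bias = -1.0
window = [1.0, 0.5, 1.0, 0.0]     # made-up sensor readings
adv = fgsm_evade(window, weights, eps=0.3)
print(anomaly_score(window, weights, bias))  # positive: flagged as anomalous
print(anomaly_score(adv, weights, bias))     # negative: the anomaly is hidden
```

Each reading changes by at most `eps`, yet the score drops by `eps * sum(|w|)`. PGD, the other attack named, repeats such steps with a smaller step size and projects the result back into the `eps`-ball around the original window after each step.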

-

- Posted on 2022-08-25

-

- [Research] Professor Simon Woo’s DASH Lab publishes two papers at The Web Conference (WWW) 2022

- Professor Simon S. Woo’s DASH Lab has published two papers at The Web Conference (WWW) 2022, a top-tier web/data mining conference in computer science, held in April 2022 in Lyon, France. Paper 1. “Am I a Real or Fake Celebrity? Evaluating Face Recognition and Verification APIs under Deepfake Impersonation Attack” (Shahroz Tariq, Sowon Jeon, and Simon S. Woo*) Abstract: Web-based multimedia technologies, such as face recognition web services powered by deep learning, have advanced significantly in recent years. As a result, companies such as Microsoft, Amazon, and Naver provide highly accurate commercial face recognition web services for a variety of multimedia applications. Naturally, such technologies face persistent threats, as virtually anyone with access to deepfakes can quickly launch impersonation attacks. These attacks pose a serious threat to authentication services, which rely heavily on the performance of their underlying face recognition technologies. Despite the gravity of this threat, deepfake abuse involving commercial web services, and the robustness of those services, have not been thoroughly measured and investigated. Through a case study on celebrity face recognition, we examine the robustness of black-box commercial face recognition web APIs (Microsoft, Amazon, Naver, and Face++) and open-source tools (VGGFace and ArcFace) against Deepfake Impersonation (DI) attacks. While most APIs make no specific claims of deepfake robustness, we find that authentication mechanisms may nonetheless be built on top of them. We demonstrate the vulnerability of face recognition technologies to DI attacks, achieving a success rate of 78.0% for targeted (TA) attacks; we also propose mitigation strategies that lower the TA attack success rate to as low as 1.26% with adversarial training. Paper 2. “BZNet: Unsupervised Multi-scale Branch Zooming Network for Detecting Low-quality Deepfake Videos” (Sangyup Lee, Jaeju An, and Simon S. Woo*) In this work, the authors propose the multi-scale Branch Zooming Network (BZNet), a novel method to detect low-quality (LQ) deepfakes. In real-world scenarios, deepfake (DF) videos are compressed into LQ videos, which take up less storage space and spread more easily through the web and social media. Such LQ DF videos are much more challenging to detect than high-quality (HQ) DF videos. To address this challenge, the authors rethink the design of standard deep learning-based DF detectors, specifically exploiting feature extraction to enhance the features of LQ images. BZNet adopts an unsupervised super-resolution (SR) technique and uses multi-scale images for training. The authors train BZNet using only highly compressed LQ images and experiment under a realistic setting where HQ training data are not readily accessible. Extensive experiments on multiple deepfake datasets show that the BZNet architecture improves the detection accuracy of existing CNN-based classifiers and outperforms state-of-the-art deepfake detection methods. The results also suggest that a multi-scale learning process has the potential to push the limits of existing CNN-based classifiers and achieve comparable results on similar low-quality vision tasks. Please contact Simon Woo (swoo@g.skku.edu) with any questions about this research.
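Paper 1 above reports attack success rates for targeted deepfake impersonation. As a generic illustration of how such a rate can be computed, and not the paper's evaluation protocol, the sketch below assumes a verifier that compares face embeddings by cosine similarity and accepts a match at or above a threshold; a targeted attack counts as a success when a deepfake probe is accepted as the target identity. The embeddings and the 0.6 threshold are made-up values, and the commercial APIs named above do not necessarily work this way.

```python
import math


def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def attack_success_rate(fake_embeddings, target_embedding, threshold=0.6):
    """Fraction of deepfake probes accepted as the target identity.

    `threshold` is a hypothetical verification cutoff, not any vendor's value.
    """
    accepted = sum(cosine_similarity(f, target_embedding) >= threshold
                   for f in fake_embeddings)
    return accepted / len(fake_embeddings)


target = [1.0, 0.0, 0.0]                     # made-up embedding of the target
fakes = [[0.9, 0.1, 0.0], [0.0, 1.0, 0.0],   # made-up embeddings of deepfakes
         [0.8, 0.0, 0.6], [0.2, 0.9, 0.4]]
print(attack_success_rate(fakes, target))    # 0.5
```

Raising the threshold lowers the success rate but also rejects more genuine users, which is why mitigation in practice tends to target the detector or the training data rather than the cutoff alone.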

-

- Posted on 2022-02-03

-

- [Research] Professor Woo Simon’s Research Lab Publishes a Paper at AAAI 2022

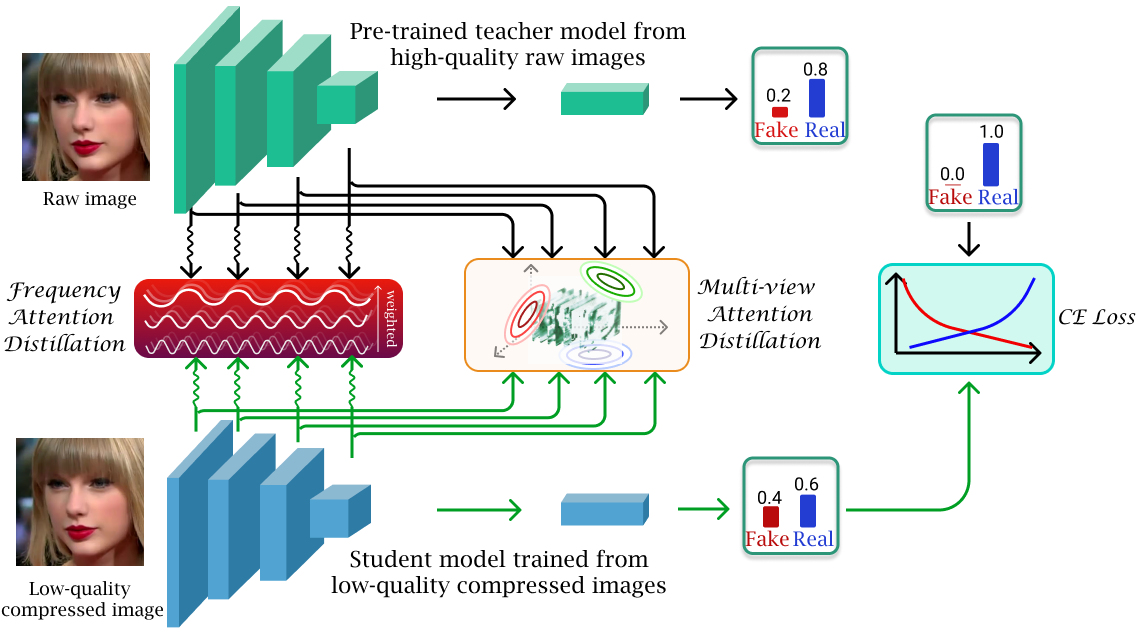

- Research by Professor Simon S. Woo and Binh M. Le, a student in his Data-driven AI Security HCI (DASH Lab) research lab, has been accepted at the Thirty-Sixth AAAI Conference on Artificial Intelligence (AAAI 2022), a top-tier artificial intelligence conference (Acceptance Rate = 15%, BK IF = 4). The work will be presented in February 2022 in Vancouver, Canada. In this work, the authors propose a novel method to detect low-quality compressed deepfake images. It draws on optimal transport, frequency-domain learning, and knowledge distillation to transfer representations from a teacher model pre-trained on high-quality images to a student model trained to detect low-quality compressed images. The authors argue that low-quality images pose two main challenges for a detection model: the loss of high-frequency information and the loss of correlation in a compressed image. To address them, they propose Attention-based Deepfake detection Distillations, which combine frequency attention distillation and multi-view attention distillation in a knowledge distillation (KD) framework to detect highly compressed deepfakes. Frequency attention helps the student retrieve and focus more on the high-frequency components from the teacher. Multi-view attention, inspired by the Sliced Wasserstein distance, pushes the distribution of the student's output tensor toward the teacher's, maintaining correlated pixel features between tensor elements from multiple views. In experiments on several benchmark datasets, the authors validate the effectiveness of their method against many previous state-of-the-art detection models.
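The multi-view attention term above is described as inspired by the Sliced Wasserstein distance. For reference, that distance between two equally sized point sets can be estimated by projecting both sets onto random unit directions, sorting the projected values, and comparing them in order, because optimal transport in one dimension simply pairs sorted values. The sketch below is a generic pure-Python Monte Carlo estimate of the squared sliced 2-Wasserstein distance, not the authors' distillation loss; the number of projections, the seed, and the toy point sets are arbitrary choices.

```python
import math
import random


def sliced_wasserstein_sq(xs, ys, n_proj=200, seed=0):
    """Monte Carlo estimate of the squared sliced 2-Wasserstein distance.

    `xs` and `ys` are equally sized lists of d-dimensional points (lists).
    Each random projection reduces the problem to 1-D, where optimal
    transport pairs the sorted projected values.
    """
    if not xs or len(xs) != len(ys):
        raise ValueError("need two non-empty point sets of equal size")
    rng = random.Random(seed)
    d = len(xs[0])
    total = 0.0
    for _ in range(n_proj):
        # Random direction on the unit sphere: a normalized Gaussian vector.
        theta = [rng.gauss(0.0, 1.0) for _ in range(d)]
        norm = math.sqrt(sum(t * t for t in theta))
        theta = [t / norm for t in theta]
        px = sorted(sum(t * v for t, v in zip(theta, x)) for x in xs)
        py = sorted(sum(t * v for t, v in zip(theta, y)) for y in ys)
        total += sum((a - b) ** 2 for a, b in zip(px, py)) / len(xs)
    return total / n_proj


teacher = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
student = [[x + 1.0, y] for x, y in teacher]    # same points shifted by (1, 0)
print(sliced_wasserstein_sq(teacher, teacher))  # 0.0
print(sliced_wasserstein_sq(teacher, student))  # close to 0.5, i.e. |shift|^2 / d
```

Used as a training loss, a term like this would be minimized to pull the student's feature distribution toward the teacher's; the sort makes the comparison independent of element order, which a plain element-wise loss is not.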

-

- Posted on 2021-12-07

-

- [Research] Prof. Yu-Seong Kim's Lab Wins 1st Prize at the Spectrum Challenge

- The Electronics and Telecommunications Research Institute (ETRI) held the Spectrum Challenge to research and develop core radio technologies that allow various new wireless services to coexist. In the radio-utilization improvement category, eight university teams competed on the theme 'Finding an efficient communication method using reinforcement learning in a multi-frequency channel sharing network environment.' The team from Sungkyunkwan University's CSI Lab (Computer Systems and Intelligence Lab), consisting of Ph.D. student Jung-in Park and Professor Yu-Seong Kim, won 1st place. CSI Lab also won first place in the 2020 Spectrum Challenge, making this its second consecutive first-place finish. The Spectrum Challenge aims to respond proactively to the paradigm shift in the global use of frequencies, a key resource for the 4th industrial revolution and a hyper-connected society, and to spearhead the development of core technologies that overcome the limits of radio resource use. The technologies and R&D support discovered through the Spectrum Challenge are expected to contribute greatly to the new supply of the 6GHz band and to promoting its use, as demand for the band is expected to surge. Full article: https://www.news1.kr/articles/?4493568

-

- Posted on 2021-11-29

-

- [Research] Professor Woo Simon’s Research Lab (DASH Lab) Publishes Two Papers at Neural Information Processing Systems (NeurIPS) 2021

- Two papers from DASH Lab (https://dash-lab.github.io/) have been accepted for publication in the Datasets and Benchmarks Track of the 35th Conference on Neural Information Processing Systems (NeurIPS 2021) (https://neurips.cc/). NeurIPS is the top-tier conference in machine learning and AI (h5-index: 245 on Google Scholar; https://scholar.google.es/citations?view_op=top_venues&hl=en&vq=eng_artificialintelligence). Paper 1. VFP290K: A Large-Scale Benchmark Dataset for Vision-based Fallen Person Detection. Jaeju An*, Jeongho Kim*, Hanbeen Lee, Jinbeom Kim, Junhyung Kang, Minha Kim, Saebyeol Shin, Minha Kim, Donghee Hong, and Simon S. Woo. Abstract: Detecting fallen persons, whether due to health problems, violence, or accidents, is a critical challenge. Detecting these anomalous events is therefore of paramount importance for many applications, including but not limited to CCTV surveillance, security, and health care. Because many detection systems depend on their training data, a comprehensive dataset of fallen-person images collected in diverse environments and situations is crucial. However, existing datasets are limited to specific environmental conditions and lack diversity. To address these challenges and help researchers develop more robust detection systems, we create a novel, large-scale dataset for fallen-person detection composed of images collected in various real-world scenarios, with the support of the South Korean government. Our Vision-based Fallen Person (VFP290K) dataset consists of 294,714 frames of fallen persons extracted from 178 videos, covering 131 scenes in 49 locations. We empirically demonstrate the effectiveness of the features through extensive experiments analyzing the performance shift across object detection models. In addition, we evaluate VFP290K by measuring the performance of fallen-person detection systems on properly divided versions of the dataset.
We ranked first in the first round of the anomalous behavior recognition track of AI Grand Challenge 2020, South Korea, using our VFP290K dataset. This result shows the usefulness of our dataset for research on fallen-person detection, which can further extend to other applications such as intelligent CCTV or monitoring systems. The data and more up-to-date information are available at our VFP290K site. Paper 2. FakeAVCeleb: A Novel Audio-Video Multimodal Deepfake Dataset. Hasam Khalid, Shahroz Tariq, Minha Kim, and Simon S. Woo. Abstract: While significant advances have been made in generating deepfakes with deep learning technologies, their misuse is now a well-known issue. Deepfakes can cause severe security and privacy issues, as they can be used to impersonate a person's identity in a video by replacing his or her face with another person's face. Recently, a new problem has emerged: synthesizing a person's voice, where AI-based deep learning models can synthesize anyone's voice from just a few seconds of audio. With the emerging threat of impersonation attacks using deepfake audio and video, a new generation of deepfake detectors is needed that focuses on video and audio jointly. Developing a competent deepfake detector typically requires large, high-quality datasets that capture real-world scenarios. Existing deepfake datasets contain either deepfake videos or deepfake audio, and they are also racially biased. Hence, there is a crucial need for a dataset containing both video and audio deepfakes that can be used to detect audio and video deepfakes simultaneously. To fill this gap, we propose a novel Audio-Video Deepfake dataset (FakeAVCeleb) that contains not only deepfake videos but also the corresponding synthesized, lip-synced fake audio. We generate this dataset using the most popular current deepfake generation methods.
We selected real YouTube videos of celebrities from four racial backgrounds (Caucasian, Black, East Asian, and South Asian) to build a more realistic multimodal dataset that addresses racial bias and further helps the development of multimodal deepfake detectors. We performed several experiments using state-of-the-art detection methods to evaluate our dataset and to demonstrate the challenges and usefulness of our multimodal audio-video deepfake dataset. The above research was conducted entirely by students and a professor at SKKU, clearly demonstrating SKKU's research competitiveness through two papers at a top-tier conference.

-

- Posted on 2021-10-21

-

- [Student] Final Presentation Results of the Intensive Summer Internship for Customized SW/AI Talent

- ▲ Strengthen strategic industry-academic partnerships through student internships ▲ Build a pipeline for corporate innovation by fostering customized SW and AI talent. [Figure 1] Dean Eun-Seok Lee of the College of Software Convergence. Sungkyunkwan University announced that its College of Software Convergence (Dean Eun-Seok Lee) ran an industry-academic cooperation summer intensive internship program for 40 days, from June 22 to August 17, jointly supported by the SW-Centered University project group and the LINC+ project group. The program is part of the 2020 Challenge Semester, a system Sungkyunkwan University introduced to give students opportunities for various learning and experiential activities that support student success. A total of 110 students majoring in software, computer engineering, and data science convergence, together with supervising faculty and 20 companies, took part in the program, which aimed to △ strengthen core competencies in SW and AI, △ strengthen strategic partnerships with industry-academic cooperation companies, and △ build corporate innovation pipelines. To prevent the spread of COVID-19, SKKU held the "Final Performance Presentation of Summer Intensive Work" online on the 13th, where a total of 21 teams joined a video conference platform to share the results of the industry-academic cooperation projects they carried out during the 40-day Challenge Semester. The award-winning teams were then decided on the 20th by faculty evaluation and student vote. In his welcoming speech, Dean Eun-Seok Lee said, "The final presentation of the Intensive Summer Internship was successfully completed thanks to the hard work of the students, professors, and companies representing Sungkyunkwan University during the Challenge Semester." He added, "I think the industry-academic cooperation project has helped students overcome their fear of companies and widen their horizons.
We will do our best to continue the winter and summer internship programs and to help these global talents grow based on an understanding of practical work and jobs." Joon-young Lee, a third-year software student, presented on the theme "Example-based FAQ System," saying, "We expect the system to answer most general questions that are not tied to specific servers or accounts, and we hope to achieve better results in the future by specifying dates and visual information." [Figure 2] Online WebEx presentation for the summer internship. Jung-ho Lee, director of the cloud management company Didim 365 (CEO Min-ho Jang), emphasized "a company that helps customers from the customer's point of view" and "the importance of organic cooperation between industry and academia," and said the company will run field experience programs and pursue employment and academic exchange projects. Finally, Professor Lee Ju-Sik said, "Over the five years of the industry-academic cooperation project, student and company participation was the most active this year. The industry-academic cooperation summer intensive internship program is a meaningful project that realizes a win-win development model in which students and companies participate together. If you take an interest in other companies' topics as well as your own projects, you can gain an integrated perspective for discovering and promoting innovative tasks for future growth." ▲ Participating companies: Vatech, Viewmagine, Soynet, CurvSurf, Escare, Seuteuratiokoria, Mecha Solution, Libo, Didim 365,
Vibe Company, Woongjin ThinkBig, Edu Tem, VTV, Auto Semantics, Shinhan Bank, Jade Apple Studio, Media Plus, Arspraxia, Cosmos Medic, I-tech Solution ▲ Supervising faculty (in ascending order): Professor Yu-Seong Kim, Professor Jae-Kwang Kim, Professor Jin-Young Park, Professor Hee-Seon Park, Professor Young-Ik Eom, Professor Ha-Young Oh, Professor Hong-Wook Woo, Professor Ju-Sik Lee, Professor Ji-Hyeong Lee, Professor Ho-Jun Lee, Professor Yu-Ho Jeon, Professor Yunkyung Jeong, Professor Jaehoon Jeong, Professor Junhee Choi, Professor Young-Sook Hwang ▲ Winners: <Innovation Award - Challenge Award> Auto Semantics (Representative: Naru Kang) team; Project title: Development of an occupancy counting imaging system for optimizing the amount of energy in the sun; Team leader: Se-hwan Park; Team members: Tae-young Kim, Young-hwan Shin, Jun Kwon, Jae-won Lee; Advisors: Professor Yu-Seong Kim, Professor Young-sook Hwang. <Creative Idea Award> Woongjin ThinkBig (Representative: Jaejin Lee) team; Project title: AI-based online Video Class Intrusion Measurement Solution Development; Team leader: Seong-wan Park; Team members: Jin-young Lee, Si-Yeol Choi, Hyo-Je Seong, Kwan-woo Lee; Advisor: Professor Hee-sun Park

-

- Posted on 2021-10-12

-

- [Research] Professor Woo Simon’s Research Lab Publishes a Paper at ACM Multimedia (ACMMM) 2021

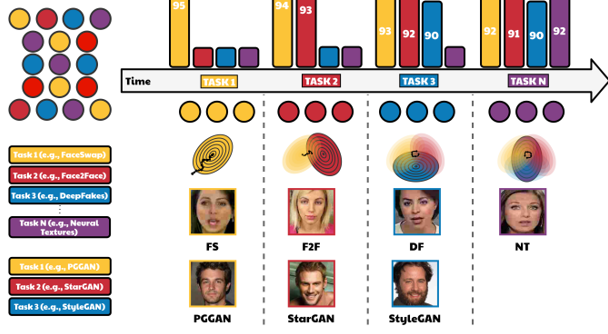

- Research by Professor Woo and Minha Kim and Shahroz Tariq, students in his Data-driven AI Security HCI (DASH Lab) research lab, has been accepted at the ACM Multimedia conference (ACMMM 2021) (BK IF=4), a top-tier multimedia computer science conference. The work will be presented in October 2021 in Chengdu, China. In this work, the authors propose a method to detect fake media such as deepfakes and synthetic face images, which have recently emerged as a significant social issue. The method uses deep learning techniques, namely continual learning, knowledge distillation, and representation learning, to efficiently detect deepfakes produced not only by previous generation techniques but also by current ones. Although earlier methods detect deepfake videos and GAN-generated images with high performance, deepfake and GAN generation techniques keep diversifying, so countermeasures are needed that detect new manipulation techniques while remaining effective on old ones. However, general methods for detecting deepfake videos and GAN images require a large amount of training data for each generation technique, which is impractical to collect and makes the model slow to train. The authors proposed a domain-adaptive transfer learning method to address these problems, but it requires a small subset of the data used for old tasks (the source dataset) to prevent the model from forgetting. In practice, such long-term preservation is challenging, and retaining source-domain data for retraining may raise privacy concerns. Therefore, in this work, they developed the CoReD algorithm, a continual learning-based approach using representation learning and knowledge distillation. Compared with other baselines, CoReD improved performance on target domains while maintaining detection accuracy on the source domain.
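CoReD combines representation learning with knowledge distillation. As background on the distillation ingredient only, the sketch below shows the standard temperature-scaled distillation loss: the KL divergence between the teacher's and the student's softened output distributions, multiplied by `T^2`. It is not the CoReD objective, and the temperature of 2.0 and the logits are arbitrary assumptions.

```python
import math


def softmax(logits, temperature=1.0):
    scaled = [z / temperature for z in logits]
    m = max(scaled)                        # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in scaled]
    s = sum(exps)
    return [e / s for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on temperature-softened outputs, times T^2.

    The T^2 factor keeps gradient magnitudes comparable across temperatures.
    """
    p = softmax(teacher_logits, temperature)   # soft targets from the teacher
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return temperature ** 2 * kl


teacher = [4.0, 1.0, 0.5]   # made-up logits from a model trained on the source task
print(distillation_loss(teacher, teacher))              # 0.0: student matches teacher
print(distillation_loss([0.5, 1.0, 4.0], teacher) > 0)  # True
```

In a continual-learning setting, a term like this penalizes the model being adapted to a new domain for drifting away from the old model's predictions, which is one common way distillation is used to limit forgetting without storing the old training data.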

-

- Posted on 2021-07-16

-

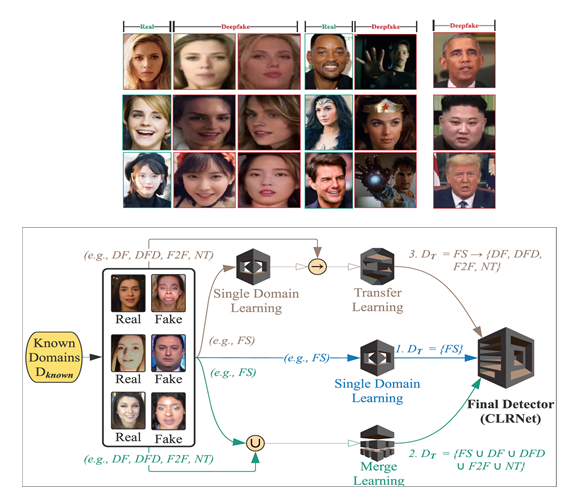

- [Research] Professor Woo Simon’s Research Lab Publishes a Paper at The Web Conference 2021

- A paper from Professor Woo and Ph.D. students Shahroz Tariq and Sangyup Lee of his DASH (Data-driven AI Security HCI, http://dash.skku.edu/) research lab has been accepted at The Web Conference (WWW) 2021 (BK IF=4), one of the top conferences in web/data mining. The work will be presented in April. In this work, they propose a deep learning-based algorithm (using Convolutional LSTM, transfer learning, and domain adaptation techniques) to detect deepfakes efficiently. Deepfake generation is open to abuse; many people with malicious intentions have used these methods to generate fake videos of female celebrities. However, detecting such deepfakes or forged images and videos is challenging due to a lack of data. A generalized classifier that performs well across different types of deepfakes is urgently needed. To prevent potential abuse of such deepfakes, this work introduces a Convolutional LSTM-based Residual Network (CLRNet), which applies a unique model training procedure and explores spatial and temporal information in deepfakes. The proposed model outperformed state-of-the-art deepfake detection models, and its performance was validated on the DeepFake-in-the-Wild dataset. [Title] “One Detector to Rule Them All: Towards a General Deepfake Attack Detection Framework”, The Web Conference 2021 (WWW 2021). Deep learning-based video manipulation methods have become widely accessible to the masses. With little to no effort, people can quickly learn how to generate deepfake (DF) videos. In particular, women have been occasional victims of deepfakes, which are widely spread on the Web.
While deep learning-based detection methods have been proposed to identify specific types of DFs, their performance suffers on other types of deepfakes, including real-world deepfakes, on which they are not sufficiently trained. In other words, most proposed deep learning-based detection methods lack transferability and generalizability. Beyond detecting a single type of DF from benchmark deepfake datasets, we focus on developing a generalized approach to detect multiple types of DFs, including deepfakes from unknown generation methods such as DeepFake-in-the-Wild (DFW) videos. To better cope with unknown and unseen deepfakes, we introduce a Convolutional LSTM-based Residual Network (CLRNet), which adopts a unique model training strategy and explores spatial as well as temporal information in deepfakes. Through extensive experiments, we show that existing defense methods are not ready for real-world deployment, whereas our defense method (CLRNet) achieves far better generalization when detecting various benchmark deepfake methods (97.57% on average). Furthermore, we evaluate our approach on a high-quality DeepFake-in-the-Wild dataset collected from the Internet, containing numerous videos and more than 150,000 frames. CLRNet generalizes well to high-quality DFW videos, achieving 93.86% detection accuracy and outperforming existing state-of-the-art defense methods by a considerable margin.

-

- Posted on 2021-01-25
